Communication-Aware Multi-Agent Exploration

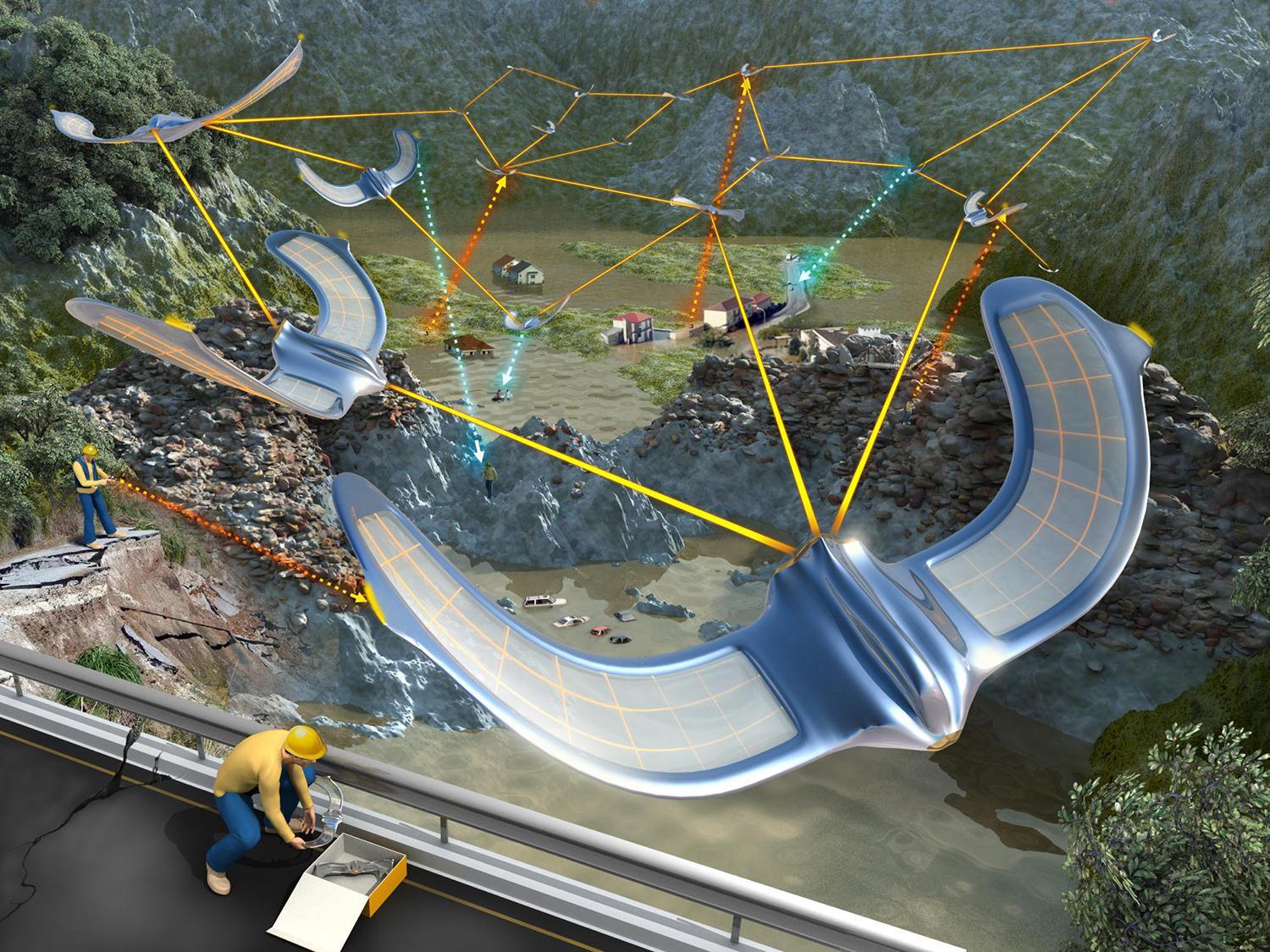

Multi-agent exploration of unknown environments is a well-established research area in robotics, with numerous applications such as search and rescue, planetary exploration, and underground mining. Deploying multiple agents allows larger areas to be covered more quickly, while also improving fault tolerance and compensating for uncertainty. However, communication limitations pose significant challenges in real-world exploration missions. In large-scale environments, agents cannot be assumed to have global communication, and transmitting large volumes of sensor data can exceed network capacity. This often leads to communication delays, or even imperfect information due to data loss, which in turn results in poor decisions. It is therefore essential to develop systems that operate effectively under communication constraints.

Approaches to information sharing in multi-agent systems can be divided into three categories. In the first, agents focus on exploration and leave connectivity to chance, which can lead to poor decisions due to incomplete knowledge of the environment. In the second, agents must maintain continuous connectivity throughout exploration, potentially sacrificing efficiency. In the third, agents actively connect and disconnect, meeting at specific times and locations planned by conventional solvers. However, such plans can be brittle to environmental changes and time-consuming to compute.

To this end, we aim to develop novel coordination strategies that optimize exploration efficiency by ensuring adequate connectivity at optimal locations. Using attention-based reinforcement learning, agents make long-term decisions on whether to connect with or disconnect from other agents, based on the partial map information acquired during exploration. Furthermore, we plan to deploy our approach on real robots, using actual signal strength from radio or Wi-Fi networks.
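The description above does not specify the policy architecture. As a hedged illustration only, the sketch below shows one common way an attention-based policy head can turn an agent's state into a connect/disconnect decision: the agent's own embedding acts as the query, each candidate decision (disconnect and keep exploring, or connect with a particular teammate) has an embedding that acts as a key, and scaled dot-product scores are normalized with a softmax into action probabilities. The function names, candidate labels, and hand-written embedding vectors are all hypothetical; in a learned system the embeddings would come from a trained encoder over the partial map, and the weights would be trained with reinforcement learning, neither of which is shown here.

```python
import math
import random
from typing import Dict, List, Sequence

Vector = Sequence[float]


def softmax(scores: List[float]) -> List[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention_policy(query: Vector, candidates: Dict[str, Vector]) -> Dict[str, float]:
    """Scaled dot-product attention of the agent's query over candidate decisions.

    Hypothetical pointer-style policy head: each candidate's key is scored
    against the query, and the scores are normalized into probabilities.
    """
    names = list(candidates)
    d = len(query)
    scores = [
        sum(q * k for q, k in zip(query, candidates[name])) / math.sqrt(d)
        for name in names
    ]
    return dict(zip(names, softmax(scores)))


def choose_action(policy: Dict[str, float], rng: random.Random) -> str:
    """Sample one decision according to the policy probabilities."""
    names = list(policy)
    return rng.choices(names, weights=[policy[n] for n in names], k=1)[0]


if __name__ == "__main__":
    # Hypothetical embeddings; a real system would compute these from the partial map.
    own = [0.2, 1.0, -0.5, 0.3]
    candidates = {
        "disconnect": [0.1, 0.2, 0.0, 0.1],  # keep exploring alone
        "connect_agent_1": [0.3, 1.2, -0.4, 0.2],
        "connect_agent_2": [-0.5, -0.3, 0.6, 0.0],
    }
    policy = attention_policy(own, candidates)
    print(policy)
    print(choose_action(policy, random.Random(0)))
```

With these toy vectors, `connect_agent_1` aligns most closely with the agent's query and therefore receives the highest probability. Sampling from the distribution, rather than always taking the argmax, reflects how stochastic policies are typically used during RL training.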

Relevant Papers:

- IR2: Implicit Rendezvous for Robotic Exploration Teams under Sparse Intermittent Connectivity

- Privileged Reinforcement and Communication Learning for Distributed, Bandwidth-limited Multi-robot Exploration

People

Guillaume SARTORETTI

Yixiao MA (NUS-SoC)

Maxime DE MONTLEBERT