Autonomous Robotic Exploration and Search in Complex Environments

1. AI-based Single-Robot Autonomous Exploration

Robotic exploration can be used to survey and monitor the environment in challenging and remote areas, such as forests, oceans, and polar regions, providing valuable data for research and conservation purposes. In such situations, the use of unmanned ground vehicles (UGVs) for exploration and surveillance can provide a significant advantage. UGVs can operate in hazardous or inaccessible areas, gather critical information, and enhance situational awareness for the users.

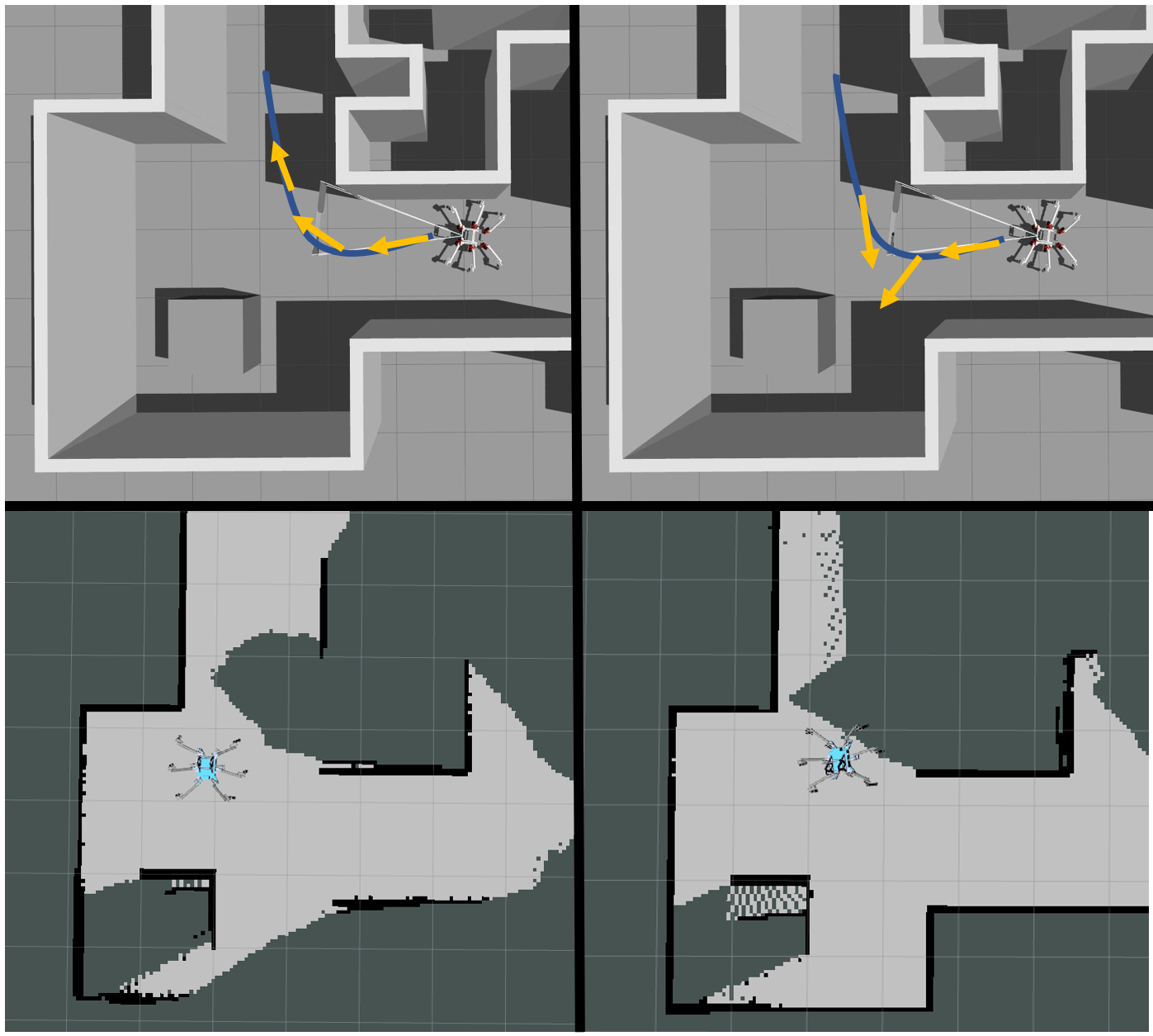

The main objective of this project is to develop an autonomous exploration system that uses UGVs and Unmanned Aerial Vehicles (UAVs) to efficiently map and explore unknown environments. The system should allow robots to work together seamlessly to explore challenging and dynamic environments, such as disaster zones, and to conduct thorough mapping and surveying. The project will use state-of-the-art deep reinforcement learning (deep RL) algorithms so that each robot can learn about the environment and reason about its current state, and thereby make an informed decision on where to explore or search next.
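To make the "where to explore next" decision concrete, the sketch below uses classical frontier-based exploration as a stand-in. It is not the deep RL approach this project targets. It assumes a 2D occupancy grid with hypothetical cell labels (`UNKNOWN`, `FREE`, `OCCUPIED`), and the function names `find_frontiers` and `nearest_frontier` are illustrative only. A frontier is taken here to be a free cell next to at least one unknown cell, and the robot simply heads for the closest one.

```python
# Hypothetical cell labels for a 2D occupancy grid (assumption, not from the project).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def find_frontiers(grid):
    """Return (x, y) of free cells with at least one 4-connected unknown neighbour."""
    height, width = len(grid), len(grid[0])
    frontiers = []
    for y in range(height):
        for x in range(width):
            if grid[y][x] != FREE:
                continue
            for dx, dy in ((1, 0), (-1, 0), (0, 1), (0, -1)):
                nx, ny = x + dx, y + dy
                if 0 <= nx < width and 0 <= ny < height and grid[ny][nx] == UNKNOWN:
                    frontiers.append((x, y))
                    break
    return frontiers


def nearest_frontier(grid, pose):
    """Pick the frontier closest to pose=(x, y) in Manhattan distance, or None."""
    frontiers = find_frontiers(grid)
    if not frontiers:
        return None  # nothing left to explore
    return min(frontiers, key=lambda f: abs(f[0] - pose[0]) + abs(f[1] - pose[1]))


if __name__ == "__main__":
    grid = [
        [FREE, FREE, UNKNOWN],
        [FREE, OCCUPIED, UNKNOWN],
        [FREE, FREE, FREE],
    ]
    print(find_frontiers(grid))
    print(nearest_frontier(grid, pose=(0, 2)))
```

A greedy rule like this ignores path cost around obstacles and how much new area each frontier would actually reveal. A learned policy is expected to trade off those longer-horizon considerations.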

The project will involve testing in a high-fidelity simulation environment in order to refine the exploration algorithms. The simulation will be designed to mimic real-world environments, including various terrain types and obstacles, and will allow different exploration strategies and algorithms to be tested. The developed algorithm will also be implemented on real UGVs to further validate its practicality and demonstrate its robustness.

The project will use various technologies and techniques, including deep reinforcement learning, multi-agent cooperation and perception. It will contribute to the development of a robotic exploration system that can enhance situational awareness and provide critical information to first responders in emergency situations. The project will also study and identify the potential challenges and limitations of UGV/UAV technology when deployed in situations that are deemed too dangerous for humans to operate in.

Related recent publications:

- ARiADNE: A Reinforcement learning approach using Attention-based Deep Networks for Exploration

- CAtNIPP: Context-Aware Attention-based Network for Informative Path Planning

- DAN: Decentralized Attention-based Neural Network to Solve the MinMax Multiple Traveling Salesman Problem

2. Autonomous Exploration through Active Perception for Legged Robots

Legged robots are often overactuated, which means that they have more degrees of freedom (DOFs) than are strictly needed for locomotion. This redundancy can be used to enhance the robot’s capabilities during locomotion, such as stabilizing its body on rough terrain or controlling its translation and heading independently. In our recent work, we focused on omnidirectional control to enable a legged robot to follow simple paths while simultaneously tracking a static landmark using a camera attached to its body.

The aim of this project is to achieve autonomous exploration by optimizing the robot’s heading to maximize the information gathered from its onboard sensor, which has a limited field of view (e.g., a camera). The robot uses its partial map and motion constraints to plan sequences of gazes that are optimized for the coverage of currently unknown areas, ultimately maximizing the completeness of the final map. This enables the robot to direct its vision sensor towards previously occluded areas as it passes by them, looking sideways or even backwards as needed to maximize map completion from a single pass.
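As a rough illustration of heading optimization for a limited field of view, the sketch below scores a set of candidate headings by how many unknown map cells would fall inside the sensor cone, then picks the best one. It is a single-step greedy simplification: it ignores occlusion and the robot's motion constraints and does not plan sequences of gazes. The grid labels, the function names (`visible_unknown_cells`, `best_heading`) and the default parameters (`fov`, `max_range`, `n_candidates`) are all assumptions made for this example.

```python
import math

# Hypothetical cell labels for a 2D occupancy grid (assumption, not from the project).
UNKNOWN, FREE, OCCUPIED = -1, 0, 1


def visible_unknown_cells(grid, pose, heading, fov=math.radians(90), max_range=5.0):
    """Count unknown cells inside the sensor cone at pose=(x, y); occlusion is ignored."""
    rx, ry = pose
    count = 0
    for y, row in enumerate(grid):
        for x, cell in enumerate(row):
            if cell != UNKNOWN:
                continue
            dx, dy = x - rx, y - ry
            dist = math.hypot(dx, dy)
            if dist == 0 or dist > max_range:
                continue
            bearing = math.atan2(dy, dx)
            # Wrap the angular difference into [-pi, pi).
            diff = (bearing - heading + math.pi) % (2 * math.pi) - math.pi
            if abs(diff) <= fov / 2:
                count += 1
    return count


def best_heading(grid, pose, n_candidates=8, **sensor_kwargs):
    """Return the candidate heading (radians) that sees the most unknown cells."""
    candidates = [2 * math.pi * k / n_candidates for k in range(n_candidates)]
    return max(
        candidates,
        key=lambda h: visible_unknown_cells(grid, pose, h, **sensor_kwargs),
    )


if __name__ == "__main__":
    # 5x5 map, fully known except three cells on the left edge (x = 0).
    grid = [[FREE] * 5 for _ in range(5)]
    for y in (1, 2, 3):
        grid[y][0] = UNKNOWN
    heading = best_heading(grid, pose=(2, 2))
    print(math.degrees(heading))  # the robot should look towards the unknown cells
```

Because heading is scored independently of the direction of travel, the selected gaze can point sideways or backwards relative to the path. A fuller planner would evaluate whole gaze sequences along the path and account for occlusions in the partial map.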

Related recent publications:

People

Guillaume SARTORETTI

Jimmy CHIUN

Ge SUN

Peizhuo LI